Lead Generation Strategies – How to Generate Leads Online


March 15, 2023

A call to action is an essential element of any webpage. It directs potential customers where to go next and helps reduce friction between them and your conversion goal.

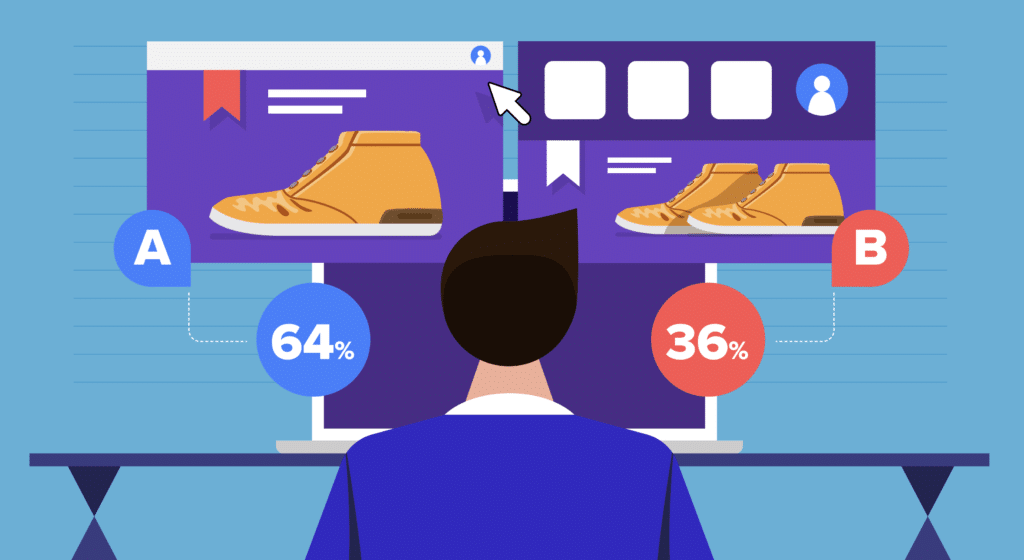

When it comes to calls to action, A/B testing them for optimal performance is critical. This could involve different visual aspects (like form, color or size), wording and placement.

Color

Color is a crucial factor to take into account when conducting an A/B test. It can significantly affect conversion rates on websites, so it’s essential to determine which color leads to the most clicks.

An A/B test is a method of comparing two distinct versions of a web page or app to see which performs better. The changes can be visible (like new UI elements or color modifications) or invisible (such as how your page loads or which recommendation algorithm it uses).

An A/B test seeks to identify which version results in more conversions. This is determined by comparing how each design performs on specific metrics like conversion rate and clicks.
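To make the comparison concrete, here is a minimal sketch of how two button colors could be compared on conversion rate. It assumes a standard two-proportion z-test, which is one common choice rather than the only one, and the function name and visitor counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rates of version A and version B.

    Returns the z statistic and a two-sided p-value.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally well
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: green button (A) vs. orange button (B)
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (commonly below 0.05) suggests the difference between the two versions is unlikely to be down to chance alone.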

Another factor to consider is how long your tests run. Because tests need enough traffic to be reliable, it’s wise to run them over an extended period so that you can collect a large amount of data.

Your test may take some time to produce results, but it’s worth waiting until it has gathered enough data to be reliable. You can also include more than one variable, such as button color and size, in the same experiment.

Managers often assume they should never change more than one factor at a time, but this isn’t always the case. For instance, if you want to test out different sizes and colors for subscribe buttons, Fung suggests showing the same group of buttons in various sizes and hues.

You might need to present the same group in various fonts and typefaces, depending on the needs of your test. This is why multivariate testing is often done – it shows how different combinations of elements perform together.
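A quick sketch shows how fast the number of variations grows when several factors are combined. The sizes, colors and the `build_variants` helper below are hypothetical examples, not taken from any particular testing tool.

```python
from itertools import product


def build_variants(**factors):
    """Return every combination of the given factors as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


# Hypothetical subscribe-button options
variants = build_variants(
    size=["small", "large"],
    color=["green", "orange", "blue"],
)

for variant in variants:
    print(variant)
print(f"{len(variants)} variations to test")
```

Two sizes and three colors already produce six variations, and each one needs its own share of traffic.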

A/B testing is an invaluable tool that businesses can use to make better decisions and boost profits. But be cautious when conducting your tests; making errors could significantly skew results, leading to wasted time and resources.

Text in Your CTA A/B Tests

A/B testing is the practice of comparing two versions of an email, newsletter, ad or landing page to see which performs better. You may alter a headline or button, or redesign the entire page in order to test different design elements that have an immediate impact on user engagement and conversion rates.

A/B testing is most commonly done as a split test, where you show one version of the page to half your audience and another version to the other half. Some tools also enable testing multiple variations simultaneously – known as multivariate testing.
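One simple way to split an audience is to hash each visitor’s ID, so the same person always sees the same version. The sketch below assumes you have a stable visitor ID; the function name and experiment label are hypothetical, and real testing tools handle this step for you.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("A", "B")):
    """Deterministically assign a visitor to one of the variants."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the hash into a bucket index; buckets are roughly equal in size
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# The same visitor always lands in the same group for a given experiment
print(assign_variant("visitor-123", "cta-color"))
```

Passing more than two entries in `variants` splits traffic across several variations in the same way.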

If your test set contains a large number of variations, you may struggle to interpret the outcomes clearly. That is because it can be challenging to differentiate between the effects of each alteration on performance.

For instance, if you’re altering the color of your call-to-action (CTA) button, it might also be beneficial to test different types of text and typeface combinations to see which combination works best for users. With this knowledge in hand, you can refine your button copy accordingly.

Often, the modifications you make don’t significantly affect the performance of the original version; however, you won’t know this until after testing. That is why it may be wise to retest your variation after some time has elapsed – such as a few weeks or months.

Additionally, you might want to limit the number of changes you test simultaneously, as more changes make the results harder to interpret. For instance, changing both the size and the color of a CTA button at once makes it unclear which change drove the result and can produce a false positive.
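The false-positive risk can be put into numbers. The sketch below assumes each comparison is independent and uses the usual 5% significance level; both are simplifying assumptions, and the function names are made up for illustration.

```python
def chance_of_false_positive(alpha, num_comparisons):
    """Probability of at least one false positive across independent comparisons."""
    return 1 - (1 - alpha) ** num_comparisons


def bonferroni_alpha(alpha, num_comparisons):
    """Stricter per-comparison threshold that keeps the overall risk near alpha."""
    return alpha / num_comparisons


for k in (1, 5, 10):
    risk = chance_of_false_positive(0.05, k)
    print(f"{k} comparisons: {risk:.0%} chance of at least one false positive")
```

The more comparisons you run at once, the more likely it is that at least one "winner" is a fluke, which is why a stricter threshold such as the Bonferroni correction is sometimes applied.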

When testing multiple variables simultaneously, user segmentation can be beneficial. By creating distinct groups of customers based on age, gender and other traits, you can identify which changes are likely to perform best for each group.
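As a rough illustration, segment-level results could be tallied like this. The event format (segment, variant, converted) and the age groups are assumptions made for the example.

```python
from collections import defaultdict


def conversion_by_segment(events):
    """Return the conversion rate for each (segment, variant) pair.

    events: iterable of (segment, variant, converted) tuples.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in events:
        counts = totals[(segment, variant)]
        counts[0] += int(converted)
        counts[1] += 1
    return {key: conv / visitors for key, (conv, visitors) in totals.items()}


# Hypothetical visits
events = [
    ("18-34", "A", True),
    ("18-34", "A", False),
    ("18-34", "B", True),
    ("35+", "A", False),
    ("35+", "B", False),
]
for (segment, variant), rate in sorted(conversion_by_segment(events).items()):
    print(f"{segment} / version {variant}: {rate:.0%}")
```

Keep in mind that each segment only receives a fraction of your traffic, so segment-level results need more time to become reliable.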

Once you understand which changes have the greatest impact on your results, you can avoid costly mistakes. Keep in mind, though, that 80% of A/B test lifts are illusory, and only sustainable improvements matter. So be sure to run your experiment correctly and use all the data collected to make informed decisions.

Placement

An A/B test compares two versions of an advert, email, website or landing page to see which performs better. When done correctly, A/B testing can have a significant impact on the success of your campaign and generate more leads, sales and revenue.

To begin an A/B test, form a hypothesis to identify what your website needs to improve its conversion rate. You can do this by analyzing site analytics and watching how visitors engage with it. Heatmaps, clickmaps and scrollmaps provide valuable insights that can help identify where problems lie.

Next, it’s essential to decide how you will conduct the test. For instance, segmentation can be used to examine changes for certain groups of visitors.

This can be achieved by segmenting your site visitors into new visitors and returning ones. Doing this allows you to test changes that only affect new visitors, such as signup forms.

When doing this, it’s essential that the two versions of a page don’t confuse Googlebot. If the variation is served from its own URL, add a rel="canonical" link to the variation page that points to the original URL, so that Google treats the original as the preferred version.
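As a small illustration, the canonical tag could be generated with a helper like the one below. The function name and the example URL are hypothetical; the resulting `<link>` element goes in the `<head>` of the variation page and points to the original page.

```python
from html import escape


def canonical_link_tag(original_url):
    """Build the <link> element that marks original_url as the preferred version."""
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'


# Hypothetical original page for a variation such as /pricing-b
print(canonical_link_tag("https://example.com/pricing"))
```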

A/B tests are an excellent way to optimize the design of your website. This may involve experimenting with fonts, text sizes, navigation and more in order to determine which works best for your business.

For instance, GRENE, an online retailer, used A/B tests to determine the optimal layout for their category pages on mobile devices. By altering the layout to reduce white space and show users several products on a single screen, they saw a 15% increase in product box clicks and 16% growth in conversions.

Although A/B testing can be an effective tool for optimizing your website, only about 25% of tests produce a significant increase in conversions. A/B testing requires careful analysis and should never be taken lightly.

Visuals

A/B testing is a reliable way to make sure your marketing campaign works and your customers get the most from it. The process can range from changing text in a CTA to completely redesigning a website, but decisions should be made only after the results have been analyzed and all findings considered.

One way to accomplish this is through A/B testing software, which will let you experiment with multiple versions of a particular element on your website and report back conversion rates so you can determine which variation performs best.

By understanding your audience better, you can determine what works best for them and adjust your site accordingly. For instance, if a new email design increases sign-ups slightly but decreases page dwell time significantly, it may be worth trying a different design, perhaps one that includes video.

Fortunately, A/B testing software can easily handle this for you by separating the traffic that views each version of a campaign and then analyzing its results. From there, you can select the winning variation and quickly deploy it.

Running an A/B test requires sufficient traffic for statistically significant results. With too little traffic, your test could yield inconclusive outcomes.
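How much traffic counts as "sufficient" can be estimated before the test starts. The sketch below uses a common textbook approximation for comparing two conversion rates; the 5% significance level, 80% power and example rates are assumptions you would adjust to your own situation.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per version to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Hypothetical: 10% baseline conversion rate, hoping to detect a 20% relative lift
print(sample_size_per_variant(baseline_rate=0.10, relative_lift=0.20))
```

Smaller expected lifts require far more visitors, which is why low-traffic pages often struggle to produce conclusive results.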

In addition to generating enough traffic, make sure your test runs long enough for the results to be meaningful. Generally, a test should run for at least two weeks.
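A rough duration estimate can be worked out from your daily traffic. The sketch below takes the two-week minimum from the guideline above; rounding up to full weeks, so that every weekday is covered equally, is an added assumption, and the traffic figures are hypothetical.

```python
import math


def estimate_duration_days(sample_per_variant, num_variants, daily_visitors, min_days=14):
    """Estimate how many days a test should run."""
    total_needed = sample_per_variant * num_variants
    days_for_traffic = math.ceil(total_needed / daily_visitors)
    days = max(days_for_traffic, min_days)
    # Assumption: round up to whole weeks so each weekday is equally represented
    return math.ceil(days / 7) * 7


# Hypothetical: 4,000 visitors needed per version, two versions, 300 visitors a day
print(estimate_duration_days(4000, 2, 300), "days")
```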

Once the results are in, you can interpret them and decide whether to proceed with the new design or keep the original version. Doing this allows for informed decisions about your next project as well as overall marketing strategy. For instance, if the new design increases click-through rate but doesn’t result in more sign-ups, focus on crafting a better CTA or redesigning your landing page overall.

“Always Be Testing.” Isn’t that the big takeaway from the movie Glengarry Glen Ross? Well… close anyway. GB Digital is never satisfied with a set-it-and-forget-it mentality. There is always something to do to improve your leads, conversions and win-backs. Want a doggedly determined digital partner? Give us a call!